by Mark Gavin

This post continues my review of Jiri Sejtko and Jindřich Kubec’s report, on the Avast Blog, of a PDF exploit discovered by Avast.

Jiri in his post says the following: “That’s another surprise from PDF, another surprise from Adobe, of course. Who would have thought that a pure image algorithm might be used as a standard filter on any object stream you want?”

I really don’t understand the “surprise”. Plenty of people have thought about embedding non-image data into image formats. For example, Jon Bentley’s “Programming Pearls: Cracking the Oyster” (Communications of the ACM, Volume 26, Issue 8, August 1983) discusses the use of bitmaps as data structures. The article was reprinted in Bentley’s book Programming Pearls (Addison-Wesley, 1986).

The PNG specification (Portable Network Graphics (PNG): Functional specification, ISO/IEC 15948:2003 (E)), in section 11.3.4, “Textual information”, explicitly allows large blocks of text to be embedded in PNG files: “the zTXt chunk is recommended for storing large blocks of text.”

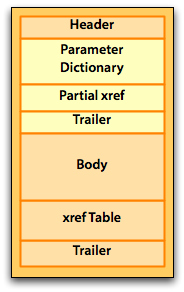

The choice of one lossless compression algorithm over another within a PDF file is simply at the discretion of the developer. Compressing and encoding a stream with multiple filters is common practice in PDF files, and has been since the release of PDF 1.0 in 1993. Multiple filters are not typically a problem for PDF parsers: the parser looks at the list of filters given for the stream and applies them in order. A PDF parser is not going to stop because it finds a filter in use that is not optimal for the data it is reading.
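To make the "apply the filters in order" step concrete, here is a minimal Python sketch. It is not how any particular PDF library is implemented; the function names and the `DECODERS` table are hypothetical, and it covers only three of the standard filter names (`FlateDecode`, `ASCIIHexDecode`, `ASCII85Decode`), with no support for decode parameters or predictors.

```python
import base64
import binascii
import zlib
from typing import Sequence


def ascii_hex_decode(data: bytes) -> bytes:
    # Keep everything before the '>' end-of-data marker and drop whitespace.
    cleaned = b"".join(data.partition(b">")[0].split())
    if len(cleaned) % 2:
        cleaned += b"0"  # an odd trailing digit is treated as if followed by 0
    return binascii.unhexlify(cleaned)


def ascii85_decode(data: bytes) -> bytes:
    # Keep everything before the '~>' end-of-data marker.
    body = data.partition(b"~>")[0]
    return base64.a85decode(body)


# Hypothetical lookup table: filter name -> decoding function.
DECODERS = {
    "FlateDecode": zlib.decompress,
    "ASCIIHexDecode": ascii_hex_decode,
    "ASCII85Decode": ascii85_decode,
}


def apply_filters(raw: bytes, filters: Sequence[str]) -> bytes:
    """Decode a stream by applying each named filter in the order listed."""
    for name in filters:
        raw = DECODERS[name](raw)
    return raw


# A stream that was Flate-compressed and then hex-encoded is decoded with
# the filter list [ASCIIHexDecode, FlateDecode].
payload = b"any bytes at all, image data or not"
encoded = binascii.hexlify(zlib.compress(payload)) + b">"
decoded = apply_filters(encoded, ["ASCIIHexDecode", "FlateDecode"])
```

Note that nothing in `apply_filters` cares what kind of data the stream holds. The loop simply runs whatever filters are listed, which is the behavior described above.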

I think one of the problems here is that the Avast Anti-Virus software does not actually parse the PDF file as a PDF file. Avast appears to simply scan the PDF file as a binary stream, looking for specific known keys and patterns which have been discovered to be malicious. Jindřich says in one of his comments: “Properly parsing pdf is not possible, as the specs sucks and Reader accepts malformed documents.” Jindřich goes on to say: “No, we are not going to implement for example JPXDecode until absolutely needed – nobody has enough time (~money) to spend it on something that ridiculous. And we don’t claim we ‘scan pdf’, we scan just the parts of the pdf, where it has sense. When our assumptions and Adobe’s lack of common sense and skills contradict, we need to scan more.”

I think there are two fundamental problems here:

1. Anti-virus applications scan a PDF file as a pure binary file, assuming that all malicious content can be seen in the clear.

I have written a couple of PDF parsers; it’s not impossible to parse PDF. But today it’s not really necessary to write a PDF parser from scratch. There are plenty of good-quality commercial and open source PDF parsers available. The Adobe PDF Library, which is used as the core of Acrobat, is even available for licensing through Adobe’s partner, Datalogics. It would be relatively easy for Avast and the other anti-virus software developers to integrate an off-the-shelf PDF parser into their anti-virus software.

2. Anti-virus applications are only looking for known exploits.

Many viruses are very similar, and many variants are built on the same code. These variants are often the result of virus writers trying to get past the anti-virus software. Since the anti-virus application is only looking for known exploits using known patterns, subtle changes to a known pattern allow malicious code to pass through the scanning process undetected. Properly parsing a PDF file would make the anti-virus software more resilient to subtle changes in the way malicious code is embedded into a PDF file.
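The difference between scanning raw bytes and scanning decoded content can be shown with a toy Python example. Everything here is an assumption made for illustration: the `SIGNATURE` pattern, the helper names, and especially the regular expression, which only recognizes the exact stream header this sketch generates. A real scanner would use a proper PDF parser to locate and decode streams.

```python
import re
import zlib

# Hypothetical byte pattern a signature-based scanner might look for.
SIGNATURE = b"unescape("


def build_object(payload: bytes) -> bytes:
    """Wrap payload in a Flate-compressed PDF stream object (toy layout)."""
    body = zlib.compress(payload)
    header = b"1 0 obj\n<< /Length %d /Filter /FlateDecode >>\nstream\n" % len(body)
    return header + body + b"\nendstream\nendobj\n"


def raw_scan(data: bytes) -> bool:
    """Look for the signature directly in the file bytes."""
    return SIGNATURE in data


# Matches only the exact header written by build_object above.
STREAM_RE = re.compile(rb"/Length (\d+) /Filter /FlateDecode >>\nstream\n")


def decoded_scan(data: bytes) -> bool:
    """Decompress each stream found, then look for the signature."""
    for match in STREAM_RE.finditer(data):
        start = match.end()
        length = int(match.group(1))
        if SIGNATURE in zlib.decompress(data[start:start + length]):
            return True
    return False


script = b"var s = unescape('%u9090%u9090%u9090%u9090');"
pdf_fragment = build_object(script)
found_raw = raw_scan(pdf_fragment)          # compressed bytes hide the pattern
found_decoded = decoded_scan(pdf_fragment)  # decoding the stream reveals it
```

The same script wrapped in a different filter, or in several filters, produces different raw bytes each time, while the decoded content stays the same. That is why pattern matching on decoded streams is less sensitive to repackaging than pattern matching on the file as a binary blob.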

A PDF file is a free-format database file. It is not limited in the types of data it can contain, though it typically contains the information needed to represent a document. I don’t think a database programmer would consider reading the raw binary of a SQL database file when trying to run a SQL query. Why, then, does the anti-virus community think it can get away with scanning just parts of the raw binary of a PDF file instead of actually parsing the PDF file correctly?